
Ajay Rajendra Kumar

I am currently pursuing research in Prof. Lorenzo Torresani's lab. I graduated from Northeastern University with a Master's in Computer Science, and I received my Bachelor's in Computer Science from Manipal Institute of Technology, Manipal.


Research

My research spans multimodal learning and Simultaneous Localization and Mapping (SLAM). I am currently focused on video understanding with vision–language models (VLMs), aiming to improve their spatial and temporal reasoning through visual grounding, agentic architectures, and expert tools. At Prof. Huaizu Jiang's Visual Intelligence Lab, I worked on SLAM: first on visual odometry with voxel representations, and then on building a dense visual SLAM system that runs on edge devices.


NeuSLAM: Dense Visual SLAM on Edge Devices

Aniket Gupta, Tianye Ding, Ajay Rajendra Kumar, Dennis Giaya, Pia Bideau, Charles Saunders, Qu Cao, Aruni RoyChowdhury, Hanumant Singh, Huaizu Jiang
Under Review at IROS 2026 | ICRA 2026 Workshop: From Sea to Space | CVPR 2026 Demo Track
A dense visual SLAM system designed for stereo and RGB-D sensors and optimized to run in real time on resource-constrained edge devices.


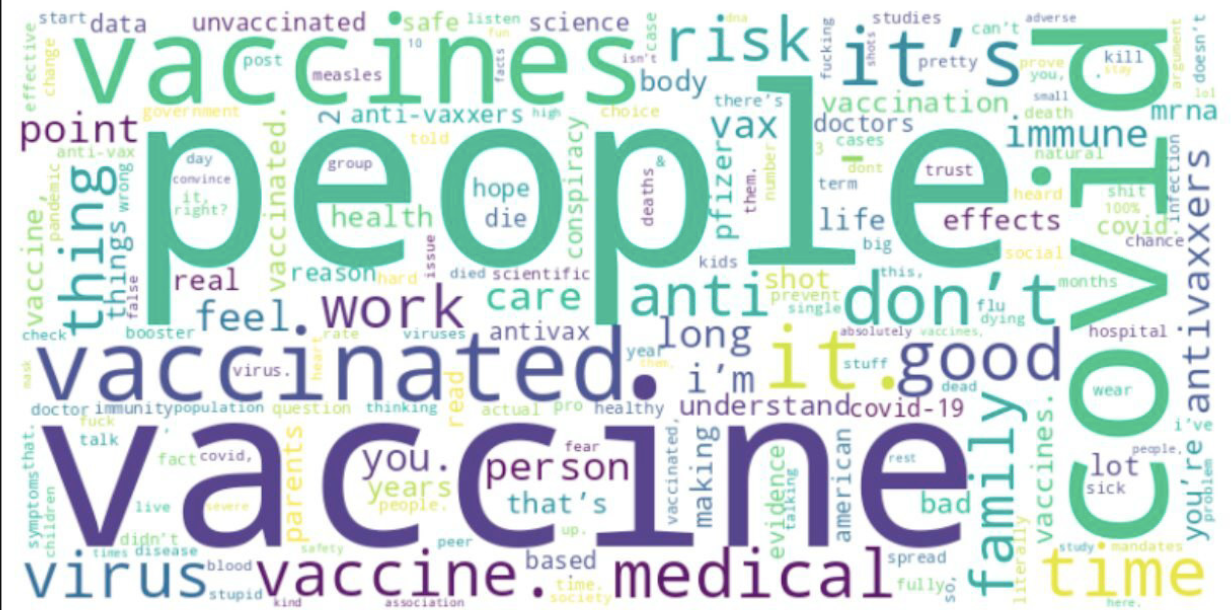

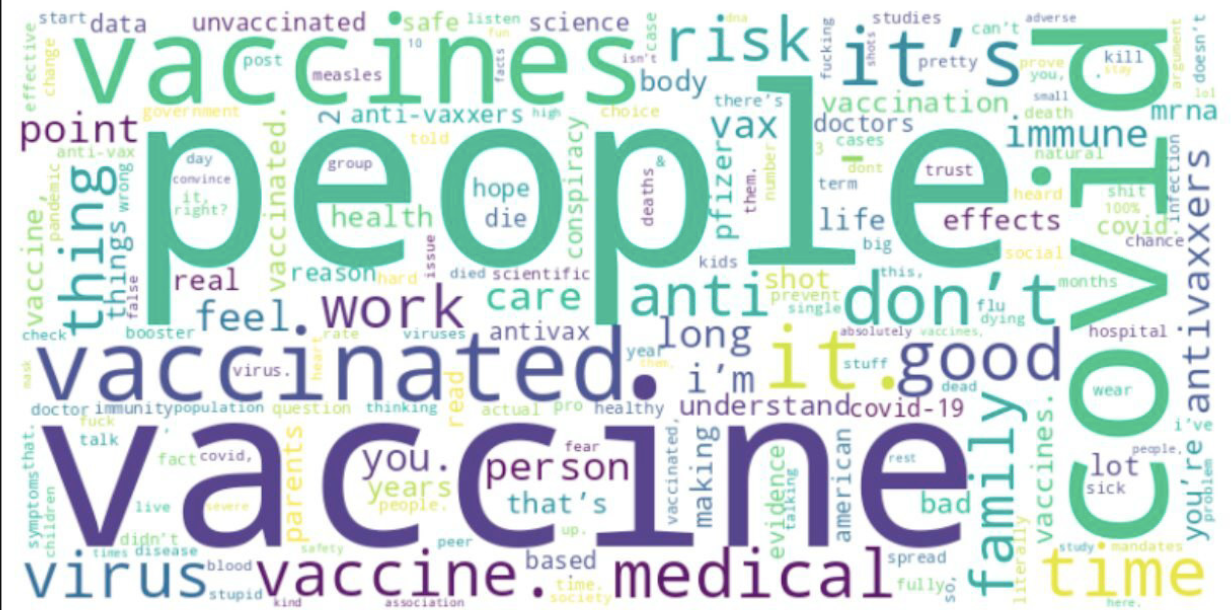

Addressing Vaccine Misinformation on Social Media by Leveraging Transformers and User Association Dynamics

C. Rao*, G. M. Prabhu*, Ajay Rajendra Kumar*, S. Gupta*, N. P. Shetty
International Conference on Machine Learning & Data Engineering (ICMLDE), 2023
Combines transformer-based language models with user association dynamics to detect and counter vaccine misinformation on social media platforms.


Design and source code from Jon Barron's website